There are people who fear that artificial intelligence will render human beings irrelevant in the workforce. Denise Kleinrichert is not one of those people.

A management professor at San Francisco State University, Kleinrichert predicts that the use of AI will become as common as the use of cell phones, and that organizational departments to oversee AI’s use will become as ordinary as human resources departments.

“Is it going to completely replace all human beings? I can’t foresee that in our lifetime. Or a future lifetime,” she said. “It’s just changing the way we do business.”

But for that rosy future to become reality, Kleinrichert said, employees must learn how AI works, where it is biased, when it might threaten privacy and how to recognize when an AI agent’s output is just plain wrong.

That’s why she is teaching a course in AI ethics and compliance as part of San Francisco State’s graduate certificate in ethical artificial intelligence, which has been offered since 2019. “Employers are looking for employees who have some savviness about what is ethical and what isn't when it comes to AI,” she said. “We need to prepare our students.”

That demand is driving the popularity of courses, certificates and master’s programs focused on AI ethics. Some are designed for students with little or no computer science background. Others focus on how to use AI in a specific field. But at the core of each program is an emphasis on avoiding harm.

“AI concerns everybody,” said Sonja Schmer-Galunder, an AI and ethics professor at the University of Florida. “We need to provide a more holistic education that is focusing on how we can do this safely and ethically.”

What’s an AI ethicist?

A 2025 report from the labor market analysis organization Lightcast found that job postings requiring generative AI skills for nontechnical roles in fields including healthcare, hospitality, education and finance grew ninefold between 2022 and 2024. And a growing number of jobs specifically require expertise in AI ethics, which means assessing whether the technology is being used responsibly and fairly within an organization or business.

More than 100,000 jobs for employees with expertise in AI ethics are now posted each year, according to a 2025 study. And while many technical skills can be learned on the job, AI ethics is highly interdisciplinary — touching on data science, business and philosophy.

Anyone using AI must consider the risks versus the benefits of relying on the tool. But for some, assessing an AI tool’s ethical reliability is the core of their job.

AI ethicists are tasked with making sure that their employer’s use of AI aligns with the law and the organization's own code of conduct. Ethicists combine knowledge about how AI works with basic ethical principles, and they are often charged with running AI tools through trustworthiness audits.

They can follow best practices for doing these audits, including a federal policy framework focused on cybersecurity and more general guidelines from the Institute of Internal Auditors. Generally, the audits focus on basic questions: How will the risks of using AI be addressed? Is the data used to train the AI models accurate? Are AI tools tested before they are deployed?

Some academic ethics programs even require students to design their own AI tools and then audit them.

“If they develop a medical application, they have to discuss the impact of any errors the system might make,” said Dragutin Petkovic, who teaches the computer science course in San Francisco State’s AI ethics certificate program. “What if it hallucinates? How will that impact the patients?”

Among the questions Petkovic wants his students to ask: Can a human pull the plug on the AI tool in case of a medical emergency? Anticipating a worst-case scenario is part of an ethicist’s job, he said.

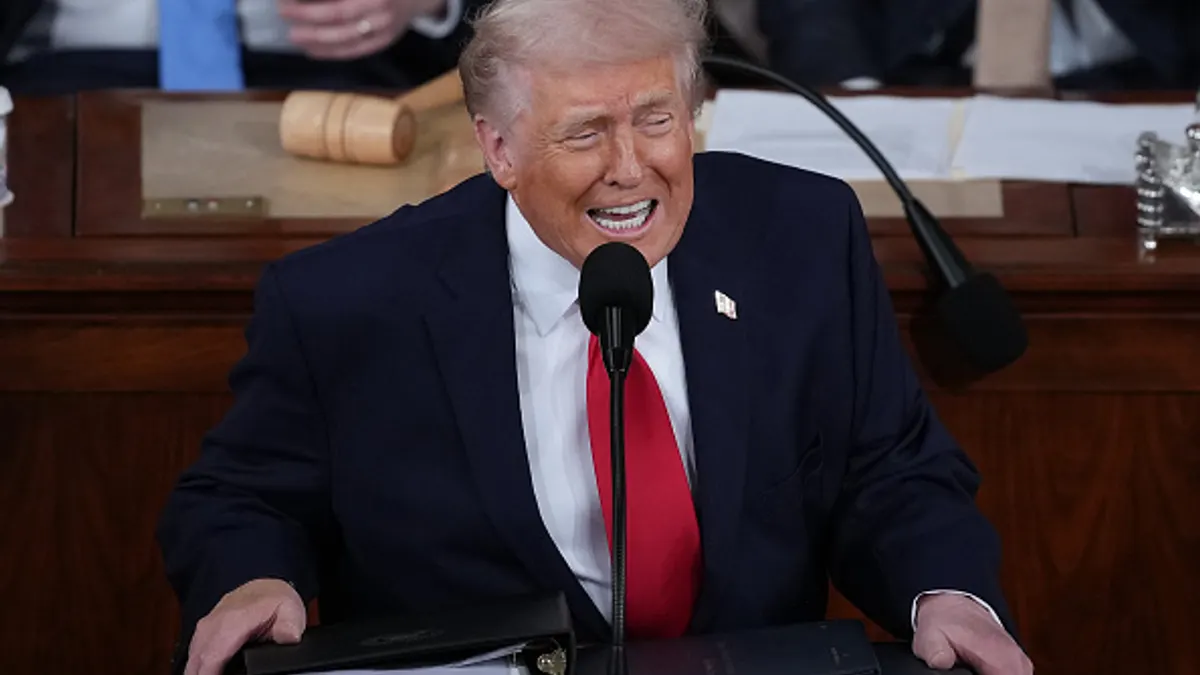

Government regulation adds a statutory layer that ethicists must also monitor. In December, President Donald Trump signed an executive order calling for a federal AI policy. Last month, the White House released a uniform national framework for AI via legislative recommendations to Congress that call for preempting separate state regulations.

Regulating use of the technology is important, said Schmer-Galunder, who teaches an AI ethics course for technology leaders that is part of a master’s degree in AI systems. It helps guard against harm while potentially codifying a society’s goals for how AI can be a benefit. Organizations that use AI, she said, should be deliberate about how AI can help solve society’s problems.

“Whatever we are trying to develop or do or deploy, we should ask whether it is going to lead to human flourishing or clean water or, you know, a positive societal outcome,” she said. That is a challenge in profit-focused corporate settings, she added.

In her course, however, students discuss ways that regulation could incentivize ethical AI as a competitive advantage.

Her students work in groups and role-play positions that are common at technology companies: program manager, user experience, budget, technical implementation and ethicist.

Together, they discuss product development scenarios and the potential risks of releasing AI-integrated products without sufficient testing. Schmer-Galunder, who is developing a separate multidisciplinary master’s degree focused exclusively on AI ethics, cited chatbots as an example.

“We have seen that they can have severe consequences when people are starting to not just anthropomorphize, but have distorted visions of reality,” she said. When AI chatbots were new, she said, developers discussed how to control aspects of a bot’s personality. But when a 14-year-old in Florida died by suicide in 2024 after allegedly falling in love with a chatbot and isolating himself from others, parent groups blamed the human-like AI bot.

“I would like to see an increased awareness of that engineering mindset of building something and putting it out there and then seeing what happens,” Schmer-Galunder said, “so there's awareness of being responsible for what you're doing and that you can be the voice of reason.”

The limits of AI

Many academic programs engage philosophy professors to examine AI and poke holes in assumptions about how it works. Even the term “artificial intelligence” is worth questioning, as it can raise expectations about what AI is capable of, said Jay Gupta, a philosophy professor at Northeastern University, in Boston, who teaches an undergraduate course in technology and human values.

“How appropriate is it to read things like autonomy into the performance of AI systems, even as we refer to autonomous vehicles?” he said, noting that a truly autonomous vehicle could decide to go wherever it wanted. “The language we use can blur, rather than clarify, important distinctions between human cognition and computational processes.”

AI ethicists, Gupta said, “should retain a philosophical awareness concerning the ambiguity of such terms.”

It is also true that AI tools make many jobs easier, particularly when web-based research is involved. Matt Cordon teaches a course in AI ethics and law at Baylor University in Waco, Texas. Other courses in Baylor’s certificate series focus on AI in healthcare and business.

Cordon said that when attorneys write pleadings or memoranda, the manual research can be time-consuming. Using an AI tool to find case citations is efficient — but it also presents big risks.

“It cites a case, and it gives you a case name and a jurisdiction. But you go to look that case up, and it doesn't exist. It's never existed,” he said. “Lawyers are being sanctioned for copying and pasting these responses into their filings. The profession is being challenged with that problem.”

An example of this took place last year in California, where an attorney was fined $10,000 for filing an appeal that was filled with fake AI-generated quotes. In September, the state’s Judicial Council issued guidelines requiring court staff to either ban the use of AI or develop their own policy for its use.

Cordon's students include lawyers, paralegals and others with an interest in how to use AI most effectively and without making egregious errors. He said that besides offering false information, such as cases that don’t exist, AI can also create crucial errors related to language.

Successful legal writing requires the use of precise language, he said, and AI tools just aren’t capable of understanding precision in specific contexts. The words “vague” and “ambiguous,” for example, can be used interchangeably in most situations, he said. But in legal contexts, they have different meanings. A statute that is “vague,” for instance, could be declared void because it doesn’t specify what is illegal. Something that is legally “ambiguous” is simply open to different interpretations.

“Large language models are trained on generally accessible data on the internet, and the internet's not always going to make a distinction between the meaning of ‘vague’ and ‘ambiguous,’” Cordon said. “Artificial intelligence doesn't necessarily recognize all of that nuance. The human has to recognize that. AI won't catch its own mistakes.”

In an increasingly AI-embedded workforce, employees need the skills to think critically about how artificial intelligence is used and whether its output is accurate.

“Health companies and banks, they need people to be educated. That is the purpose of our certificate,” said San Francisco State’s Petkovic. “Also, people in decision-making roles in state or federal government need some education about how it works.”